I recently wrote a blog post where I spent some time trying to build out nested environments by cloning an ESXi template directly in vSphere. This didn’t quite work out as expected for a number of reasons: the MAC address, the storage UUID, and the overall system UUID all seemed to persist into the clone. While trying to solve those issues and get my automated nested setup working, I discovered the kickstart script that is available for installing ESXi from an ISO and automating the setup.

You can read more about the Kickstart script on the VMware official page. Basically, it is a simple ks.cfg script that you inject into the ISO. When you create the VM and attach the ISO as a CD-ROM drive, the script handles the installation as well as the network settings, NTP, DNS, and regeneration of certificates (needed for VCF). Pretty much any esxcli command that you would normally run should be available via this startup script.

This blog post covers the KS.CFG file, as well as the Ansible required to set up some nested ESXi hosts. The automation will create the ISO and automatically create the VM on the physical ESXi host. After the VM boots, the KS.CFG script will install ESXi and apply the network settings. You can loop through and create many nested ESXi hosts with this script.

Software Versions used in this demo

| Software | Version |
|---|---|
| vSphere ESXi | 8.0 U2 |
| Ansible | 2.12.10 |
| Ansible Galaxy | 2.12.10 |
| Ansible Python Interpreter | 3.8.10 |
| Jinja | 2.10.1 |
| xorriso | 1.5.2-1 |

Overview

There are a few sections we’ll cover in this blog.

- Review the KS.CFG file

- Review the ansible

- See the automated esxi deployment in action

Review the KS.CFG file

The kickstart configuration file is used to prep the ESXi host and runs when the ISO boots. So we need to create this file, modify the properties as needed, and inject the file into the ISO. Finally, the ISO’s boot configuration needs to reference the KS.CFG file so the installer actually loads it.
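
To make "inject and reference" a bit more concrete, here is a rough shell sketch of how it can be done by hand with xorriso. The playbook in the repo may well do this differently. The function names and all paths are hypothetical, and the sketch assumes the extracted ISO uses upper-case file names (BOOT.CFG, ISOLINUX.BIN, BOOT.CAT, EFIBOOT.IMG) and that BOOT.CFG contains a kernelopt= line.

```shell
#!/bin/sh
# Hypothetical helper names and paths; adjust for your environment.

# Extract the stock installer ISO into a working directory.
extract_iso() {  # usage: extract_iso esxi.iso iso_dir
    xorriso -osirrox on -indev "$1" -extract / "$2"
    chmod -R u+w "$2"   # make the extracted tree writable so it can be edited
}

# Point the installer at the kickstart file by rewriting the kernelopt line.
# Assumption: the file has exactly one line starting with "kernelopt=".
reference_ks() {  # usage: reference_ks path/to/BOOT.CFG
    sed 's|^kernelopt=.*|kernelopt=runweasel ks=cdrom:/KS.CFG|' "$1" > "$1.tmp" &&
        mv "$1.tmp" "$1"
}

# Rebuild a bootable ISO (BIOS and UEFI) from the working directory.
# Assumption: upper-case boot file names, as they appear after extraction.
repack_iso() {  # usage: repack_iso iso_dir custom.iso
    xorriso -as mkisofs -relaxed-filenames -J -R \
        -b ISOLINUX.BIN -c BOOT.CAT -no-emul-boot -boot-load-size 4 -boot-info-table \
        -eltorito-alt-boot -e EFIBOOT.IMG -no-emul-boot \
        -o "$2" "$1"
}
```

A typical sequence would be extract_iso, copy KS.CFG into the root of the working directory, run reference_ks on BOOT.CFG (and most likely on EFI/BOOT/BOOT.CFG as well, for UEFI boot), then repack_iso.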

Special Note: KS.CFG file is case sensitive. So make sure you put the filename in all CAPS, or it will fail to load. There, I just saved you 2 hours of troubleshooting.

So let’s take a look at the flow of the KS.CFG file

- Accept the EULA agreement for install

- Set the root Password

- Install ESXi onto the first available disk

- Set some network settings like dhcp or static IP addresses.

- Reboot

- Enable ssh (mostly just for testing and troubleshooting. Not required)

- Suppress some warnings

- Disable CEIP

- Set the DNS server

- Regenerate the certificates (Used for VCF)

- Set NTP servers and enable NTP on boot

- Reboot

```
# Accept the VMware End User License Agreement
vmaccepteula

# Set the root password
rootpw "SUPERSECRETPASSWORD123"

# Install ESXi to the local disk
install --firstdisk --overwritevmfs

# Host Network Settings
# network --bootproto=dhcp --device=vmnic0
network --bootproto=static --addvmportgroup=0 --ip=192.168.3.16 --netmask=255.255.255.0 --gateway=192.168.3.1 --nameserver=192.168.3.6 --hostname=esxi-host1.home.lab

# Reboot ESXi host
reboot

# Open busybox and launch commands
%firstboot --interpreter=busybox

# Enable & start remote ESXi Shell (SSH)
vim-cmd hostsvc/enable_ssh
vim-cmd hostsvc/start_ssh

# Enable & start ESXi Shell (TSM)
vim-cmd hostsvc/enable_esx_shell
vim-cmd hostsvc/start_esx_shell

# Suppress ESXi Shell warning
esxcli system settings advanced set -o /UserVars/SuppressShellWarning -i 1

# Disable CEIP
esxcli system settings advanced set -o /UserVars/HostClientCEIPOptIn -i 2

# Configure DNS servers
esxcli network ip dns server add --server=192.168.3.6

# Regenerate the certificate
/sbin/generate-certificates

# Configure NTP
esxcli system ntp set -s=pool.ntp.org
esxcli system ntp set -e=yes

# Reboot ESXi host
reboot
```

The above file is an example of a KS.CFG. Pretty much any ESXCLI command you would want to run should be available via this script. But for my needs, this is all I am configuring. Additionally, since I am using an ansible playbook to create this file and inject it into the ISO, this file will be dynamically written via variables. As you will see in the playbook below, you actually configure the network settings in a variables file, and this KS.CFG file is configured dynamically for you.
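
However the playbook renders the file internally, the underlying idea is plain variable substitution. A minimal shell sketch of that idea follows. The function name render_ks, the ROOT_PW variable, and the argument order are all made up for illustration and are not the repo's variable names. The %firstboot section is left out to keep the sketch short.

```shell
#!/bin/sh
# Hypothetical sketch: print the install-time part of a KS.CFG for one host.
# ROOT_PW is an assumed environment variable, not something the repo defines.
render_ks() {  # usage: render_ks hostname ip netmask gateway dns
    cat <<EOF
vmaccepteula
rootpw "${ROOT_PW:-CHANGEME}"
install --firstdisk --overwritevmfs
network --bootproto=static --addvmportgroup=0 --ip=$2 --netmask=$3 --gateway=$4 --nameserver=$5 --hostname=$1
reboot
EOF
}

# Example: one file per nested host, each in its own build directory.
# mkdir -p build/esxi-host1
# render_ks esxi-host1.home.lab 192.168.3.16 255.255.255.0 192.168.3.1 192.168.3.6 \
#     > build/esxi-host1/KS.CFG
```

Calling the function once per host with a different hostname and IP is all it takes to get a distinct KS.CFG for each nested ESXi host.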

If you’re using the ansible playbook to configure these nested hosts, see the next step on how to set the variables.

Review the ansible

OK, so we know what the KS.CFG file is used for. Now let’s put it all together and automate it.

The automation is simple and can be run from basically any machine with Ansible installed. I am using an Ubuntu server, but any Linux distro should work, and probably macOS or Windows (via WSL) as well. You need Ansible and a few packages, that’s it. Whatever machine runs the Ansible playbook, we’ll call it the jumpbox.

Prepping the Jumpbox:

- Update packages

- Install Ansible

- Install the Ansible collection community.vmware

  - ansible-galaxy collection install community.vmware

- Install xorriso (used for extracting ISO files and recreating the ISO)

  - sudo apt-get install xorriso

- Install Git

- Clone the repo

  - git clone https://github.com/canad1an/create-nested-esxi-ansible.git
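
Before kicking anything off, it can be handy to confirm the jumpbox actually has everything from the list above. This is just a small convenience sketch and not part of the repo. The function name missing_tools is made up.

```shell
#!/bin/sh
# Hypothetical preflight helper: print each named tool that is not on PATH.
missing_tools() {  # usage: missing_tools tool [tool...]
    for tool in "$@"; do
        command -v "$tool" >/dev/null 2>&1 || echo "$tool"
    done
}

# Example: report anything the playbook run would trip over.
# missing=$(missing_tools ansible-playbook ansible-galaxy xorriso git)
# [ -z "$missing" ] || echo "Install first: $missing"
```

To check the collection as well, ansible-galaxy collection list community.vmware should show it once it is installed.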

You can view the GitHub repo here: VMware vSphere Nested ESXi Environment (https://github.com/canad1an/create-nested-esxi-ansible)

See the readme for the exact steps on which variables to modify. That is all you need to do for this section.

See the automated esxi deployment in action

I’ll be starting from a clean install to show this demo. All in, it takes about 10 minutes to deploy and configure 4 ESXi hosts, based on my testing. That time includes the ESXi install and 2 reboots.

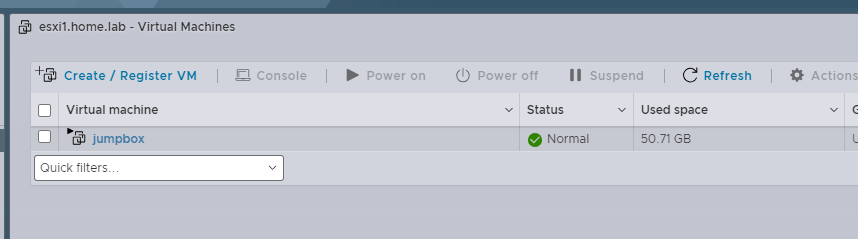

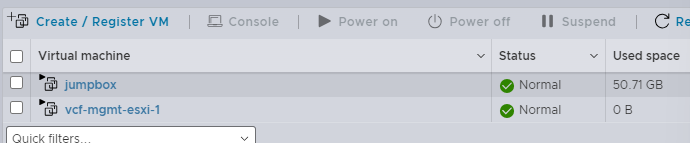

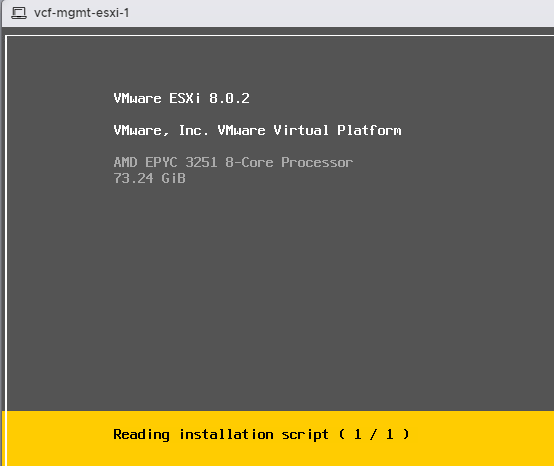

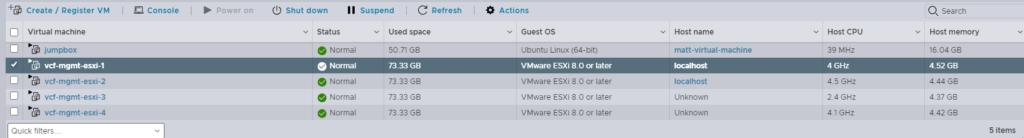

Starting from a clean install as shown above.

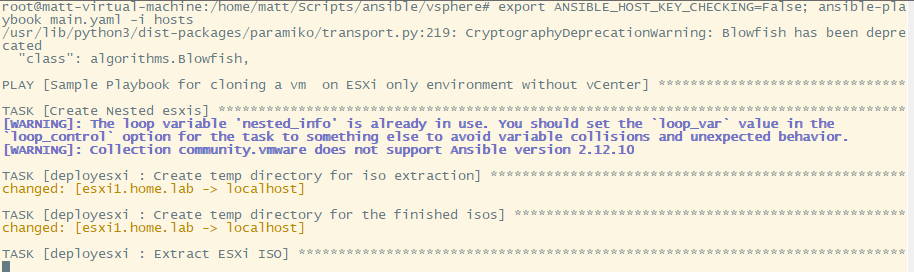

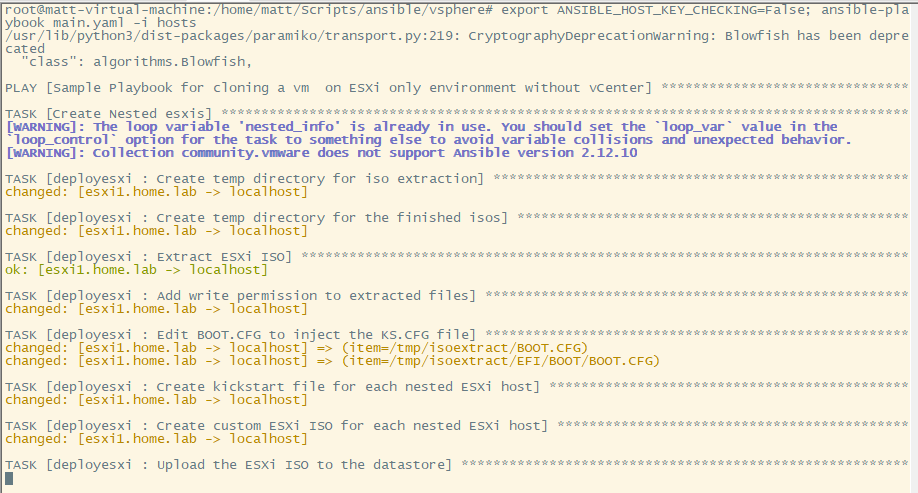

Kicking off the ansible playbook as shown above.

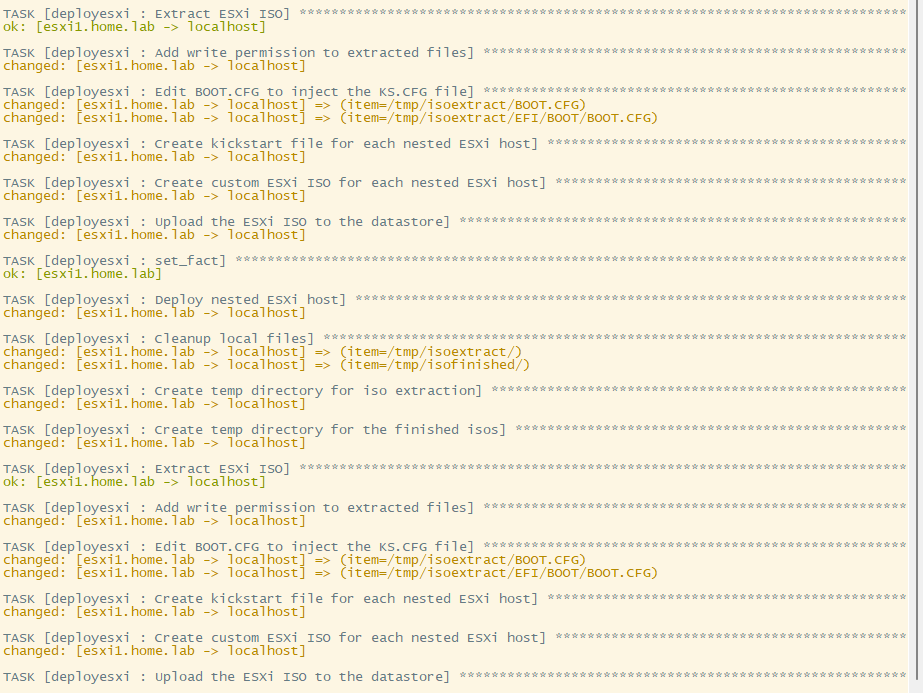

A little pause in the script here while the ISO uploads to the physical esxi host.

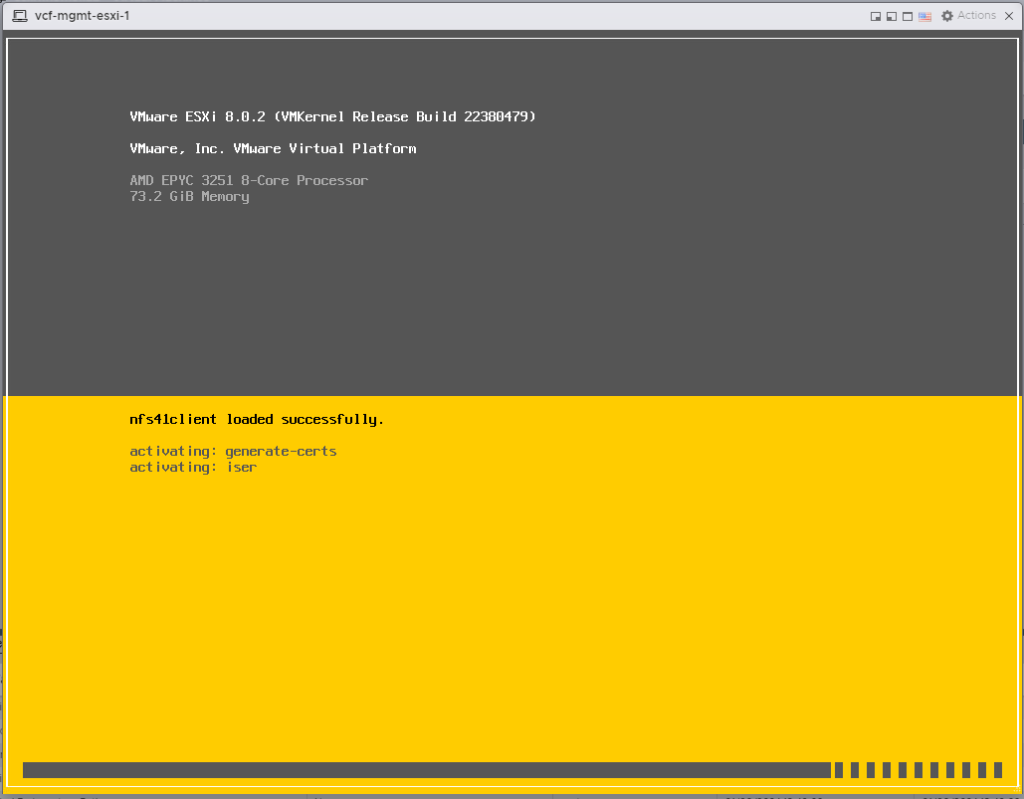

First esxi VM created and booting up, on to the next.

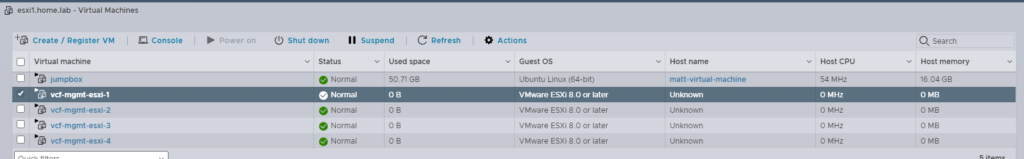

At this point in the script, all 4 VMs have been created and are powered on. They will be starting their installations at this point, and the ansible playbook will pause for 5 minutes to give them all time to finish the install.
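
If a fixed pause turns out to be too short or too long in your lab, one alternative is to poll each host until it answers. The sketch below is not what the playbook does. It is a generic retry helper with a made-up name, wait_until, and the example probe assumes netcat is installed on the jumpbox.

```shell
#!/bin/sh
# Hypothetical helper: retry a probe command until it succeeds
# or the given number of attempts is used up.
wait_until() {  # usage: wait_until attempts delay_seconds command [args...]
    attempts=$1
    delay=$2
    shift 2
    n=0
    while [ "$n" -lt "$attempts" ]; do
        "$@" && return 0      # probe succeeded, stop waiting
        n=$((n + 1))
        sleep "$delay"
    done
    return 1                  # gave up
}

# Example probe (assumes netcat): wait up to 10 minutes for SSH on one host.
# wait_until 60 10 nc -z -w 2 192.168.3.16 22
```

Since the %firstboot section of the KS.CFG is what enables SSH, port 22 answering suggests that section has at least started. The host still performs its final reboot afterwards, so treat this as a rough signal rather than proof that configuration is complete.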

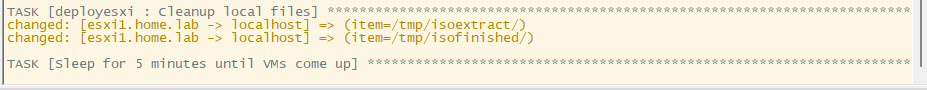

The last step in the ansible playbook is to do some cleanup and remove the ISO files from the physical ESXi.

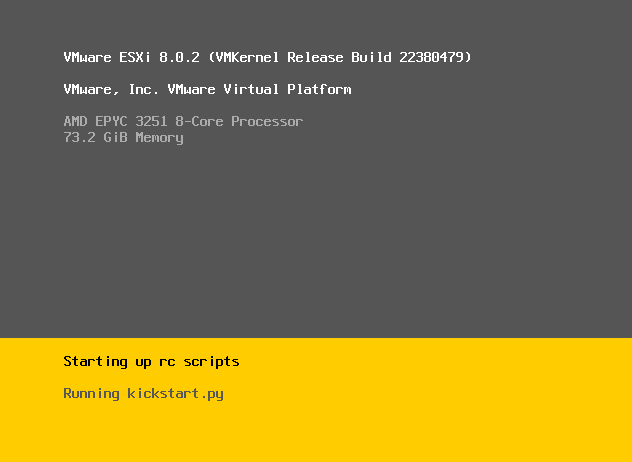

Kicking off the ESXi kickstart script

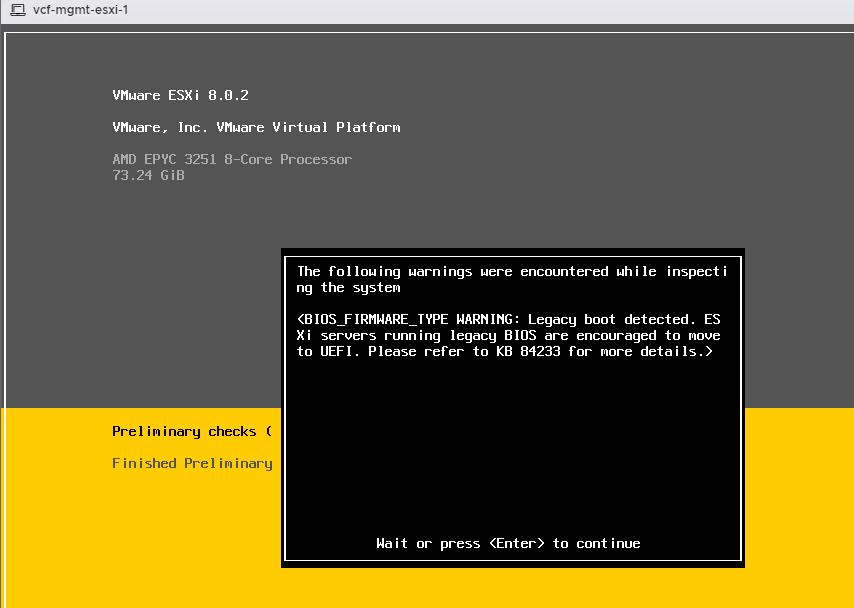

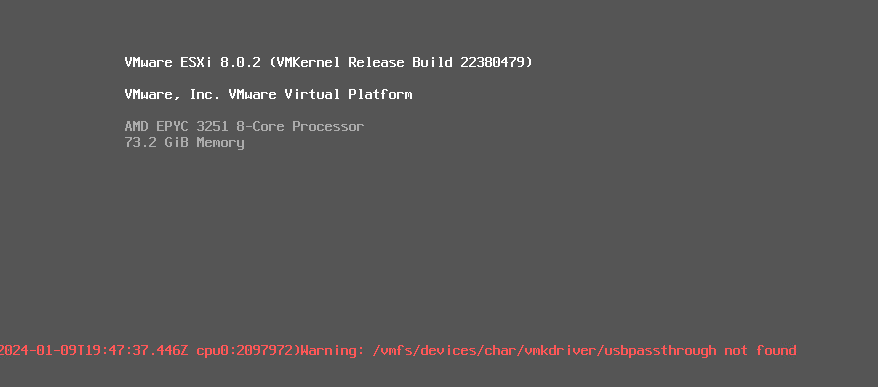

A warning… No idea what it is, but it goes away.

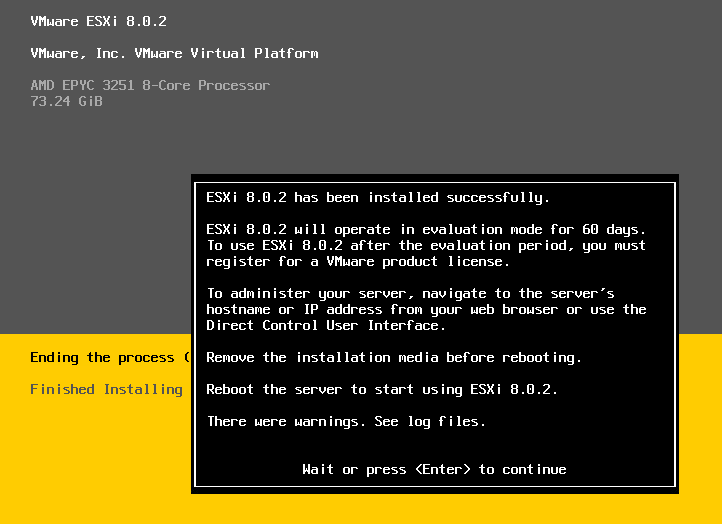

ESXi successfully installed, give it a minute and it will reboot.

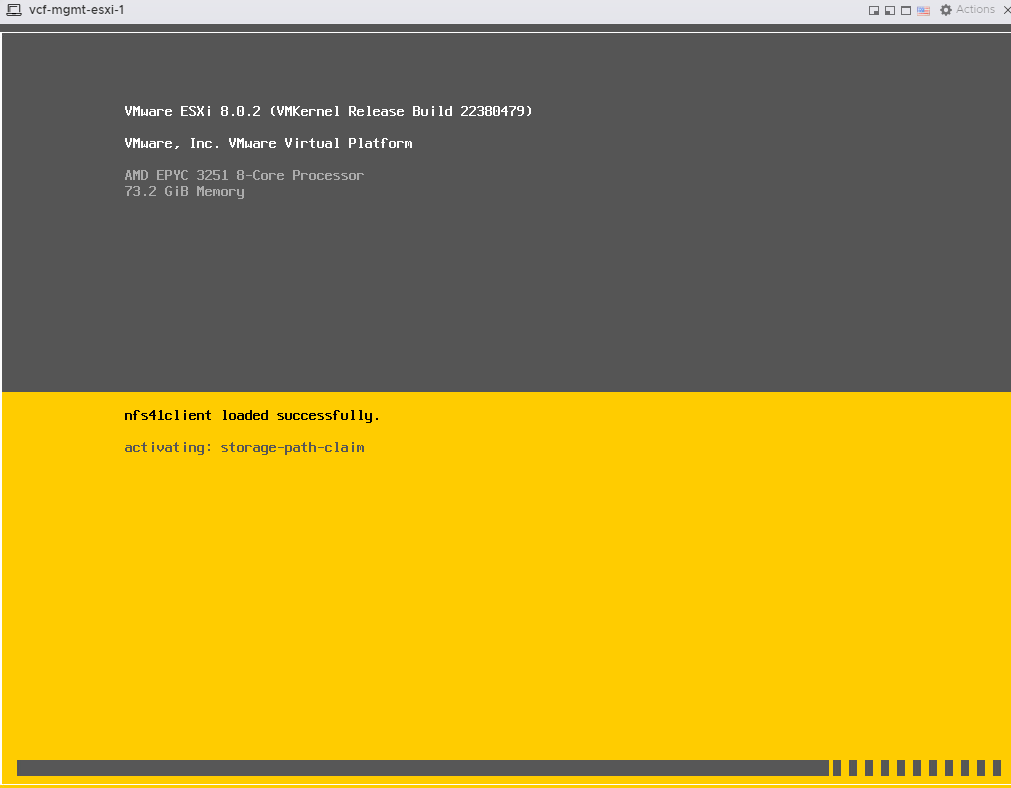

Kicking off part 2 of the kickstart script. Configuration of the network settings.

Another weird warning, wouldn’t worry about it, it goes away.

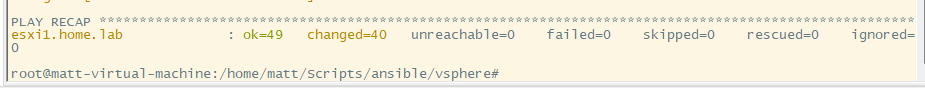

Checking over to the ansible playbook and I see it has finished.

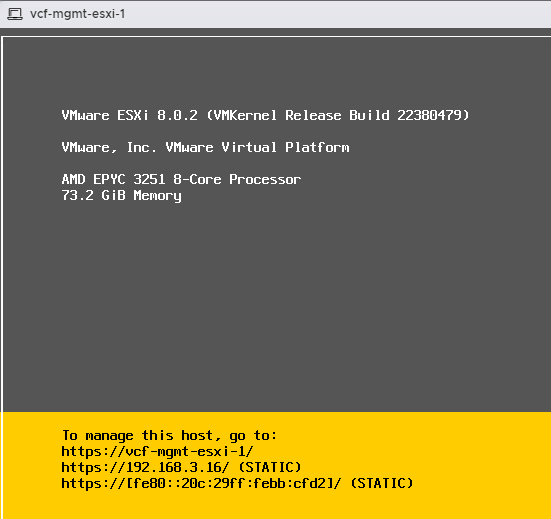

The first ESXi host is complete and ready. Network settings are done and certificates are regenerated (required for VCF).

Finally, after just under 10 minutes, all 4 hosts are ready for nesting.

Is there a way to do a scripted installation on bare metal directly, instead of nested?

You could definitely inject the KS.CFG into any iso and install it directly on a bare metal esxi host. The nested environment just mimics the physical env.

I’m installing ESXi on Dell servers with Ansible using UEFI HTTP boot and the Redfish API (which is supported by iDRAC), it works great.

You don’t even need to build custom ISO files, as the ESXi will search for its “boot configuration file” in a directory named as the MAC address of the host : https://docs.vmware.com/en/VMware-vSphere/7.0/com.vmware.esxi.upgrade.doc/GUID-EA4C5A77-2C88-4519-AB94-E56871EE6DF4.html

Thanks a lot for this script !!!!

Do you have the same for VCSA ?

Thanks